Azure AD Identity Protection is one of the security tools whose usage has grown considerably over the last few years in the environments I have worked with. I have written multiple posts about it, and you can find the older posts (which are still valid) here:

- The latest one from securecloud.blog hosted by my awesome colleague and friend @santasalojoosua

- The second one is on my own site: “Azure AD Identity Protection Detection Capabilities”

The main purpose of this blog was to test and identify the detection mechanisms of Azure AD Identity Protection (IPC). Kudos to @Thomas_Live for the inspiration!!

Disclaimer: I was not able to test all the detections because some of the risks are calculated offline using Microsoft’s internal and external threat intelligence sources.

I ran the tests in only one of my demo environments. As background, it is important to understand the Azure AD Identity Protection reporting latency. According to Microsoft:

- Real-time detections may not show up in reporting for five to ten minutes

- Offline detections may not show up in reporting for two to twenty-four hours
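To make these windows concrete, here is a minimal sketch that turns an activity timestamp into the period in which the detection could be expected to show up in reporting. The function name and the dictionary are my own illustration, and treating the quoted figures as a strict minimum and maximum is an assumption.

```python
from datetime import datetime, timedelta

# Reporting windows as quoted above (minimum delay, maximum delay).
REPORTING_WINDOW = {
    "real-time": (timedelta(minutes=5), timedelta(minutes=10)),
    "offline": (timedelta(hours=2), timedelta(hours=24)),
}


def expected_reporting_window(activity_time, detection_type):
    """Return (earliest, latest) time the detection may appear in reporting."""
    low, high = REPORTING_WINDOW[detection_type]
    return activity_time + low, activity_time + high


# Example: the Tor sign-in used later in this post (September 6, 2020, 5:24 PM).
earliest, latest = expected_reporting_window(datetime(2020, 9, 6, 17, 24), "real-time")
print("Check reporting between", earliest, "and", latest)
```

This is handy when testing: if nothing shows up after the end of the window, the detection most likely did not trigger.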

Background

Azure AD Identity Protection (IPC) is an Azure AD P2 feature that has been generally available for several years. In 2019 Microsoft “refreshed” IPC, adding new detection capabilities and an enhanced UI. Since then, some new detection models have been introduced, along with deeper integration with Azure AD Conditional Access. With that integration, you can create multiple organization-level “user risk-based” and “sign-in risk-based” Conditional Access policies. This is also the approach I recommend when configuring policies for IPC.
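As a rough sketch of what such risk-based policies could look like, the snippet below builds two policy bodies in the shape of the Microsoft Graph `conditionalAccessPolicy` resource as I understand it. The display names, risk levels and the helper function are my own assumptions, not the policies from my tenant, so verify the property names against the Graph documentation before using anything like this.

```python
import json


def risk_policy(display_name, risk_key, levels, controls, operator):
    """Build a Conditional Access policy body (illustrative, not sent anywhere)."""
    return {
        "displayName": display_name,
        # Report-only mode, so the sketch cannot lock anyone out.
        "state": "enabledForReportingButNotEnforced",
        "conditions": {
            "users": {"includeUsers": ["All"]},
            "applications": {"includeApplications": ["All"]},
            "clientAppTypes": ["all"],
            risk_key: levels,
        },
        "grantControls": {"operator": operator, "builtInControls": controls},
    }


# Hypothetical sign-in risk-based policy: require MFA on medium and high risk.
sign_in_policy = risk_policy(
    "Require MFA for risky sign-ins", "signInRiskLevels", ["medium", "high"], ["mfa"], "OR"
)

# Hypothetical user risk-based policy: as far as I know, passwordChange has to
# be combined with mfa using the AND operator.
user_policy = risk_policy(
    "Require password change for risky users", "userRiskLevels", ["high"],
    ["mfa", "passwordChange"], "AND",
)

print(json.dumps(sign_in_policy, indent=2))
print(json.dumps(user_policy, indent=2))
```

In a real tenant you would also exclude emergency access accounts from the policies before enforcing them.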

Currently, IPC has the following detection mechanisms available. The table below includes both offline and real-time detections. A reference list can be found in the Microsoft docs. Some of the detections are not listed on docs.microsoft.com but can be found in partner community presentations.

Identity Protection Detection Rules

In this chapter, you will find short descriptions of each detection mechanism and my test results, including alert details and latency. More information is available on how you can simulate Identity Protection detection rules.

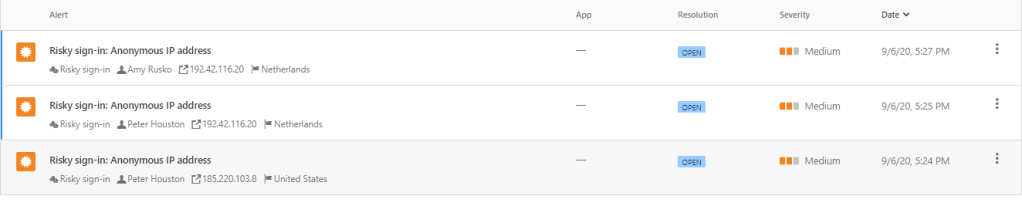
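The detections can also be pulled programmatically from the Microsoft Graph `riskDetections` endpoint. The sketch below only builds the query URL. The mapping from portal names to `riskEventType` values is to the best of my knowledge and should be checked against the Graph documentation; I left out the MCAS-based detections because I am less sure about their identifiers.

```python
from urllib.parse import quote

GRAPH_BASE = "https://graph.microsoft.com/v1.0"

# Portal name -> riskEventType value (assumed mapping, verify before relying on it).
RISK_EVENT_TYPES = {
    "Anonymous IP address": "anonymizedIPAddress",
    "Atypical travel": "unlikelyTravel",
    "Malware linked IP address": "malwareInfectedIPAddress",
    "Unfamiliar sign-in properties": "unfamiliarFeatures",
    "Admin confirmed user compromised": "adminConfirmedUserCompromised",
    "Malicious IP address": "maliciousIPAddress",
    "Password spray": "passwordSpray",
    "Leaked credentials": "leakedCredentials",
}


def risk_detections_url(detection_name):
    """Build a Graph URL that filters risk detections by one detection type."""
    odata_filter = f"riskEventType eq '{RISK_EVENT_TYPES[detection_name]}'"
    return f"{GRAPH_BASE}/identityProtection/riskDetections?$filter={quote(odata_filter)}"


url = risk_detections_url("Anonymous IP address")
print(url)
```

Calling the URL requires an access token with permission to read identity risk events, which is outside the scope of this sketch.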

Anonymous IP address (Real-time)

According to Microsoft: “This risk detection type indicates sign-ins from an anonymous IP address (for example, Tor browser or anonymous VPN). These IP addresses are typically used by actors who want to hide their login telemetry (IP address, location, device, etc.) for potentially malicious intent”.

Activity

The test was performed with the Tor browser from an Azure VM located in the West Europe region.

Alert and Latency

The detection mechanism is real-time, so the related events were created immediately after the activity, without any delay.

Tor session activated: Sunday, September 6, 2020 5:24 & 5:25 PM

Detection: Real-time – 09/06/2020, 5:24

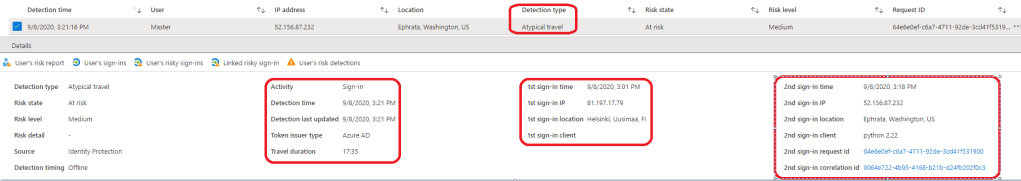

Atypical travel (Offline)

What needs to be considered here is that you need enough sign-in data collected. The system has an initial learning period of 14 days or 10 logins, whichever comes first, during which it learns a new user’s sign-in behavior. If you are testing with a newly created user, the alert most probably will not be generated because there isn’t enough sign-in data available for the detection to work.
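The learning period rule can be expressed as a small check. This is only my reading of the rule (learning ends as soon as either 14 days or 10 sign-ins is reached), and the function and parameter names are made up for illustration.

```python
from datetime import date


def in_learning_period(first_sign_in, today, sign_in_count, max_days=14, max_sign_ins=10):
    """Return True while the user is still inside the initial learning period."""
    days_observed = (today - first_sign_in).days
    # Learning ends at the earlier of the two thresholds.
    return days_observed < max_days and sign_in_count < max_sign_ins


# A test user created three days ago with four sign-ins is still learning.
print(in_learning_period(date(2020, 9, 5), date(2020, 9, 8), 4))
# The same user with twelve sign-ins has already left the learning period.
print(in_learning_period(date(2020, 9, 5), date(2020, 9, 8), 12))
```

This is why a freshly created test account is a poor candidate for the atypical travel test.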

According to Microsoft: “This risk detection type identifies two sign-ins originating from geographically distant locations, where at least one of the locations may also be atypical for the user, given past behavior. Among several other factors, this machine learning algorithm takes into account the time between the two sign-ins and the time it would have taken for the user to travel from the first location to the second, indicating that a different user is using the same credentials”.

Activity

- Using the browser, I navigated to https://myapps.microsoft.com

- I entered the credentials of the account

- Changed the user agent. I used a custom user agent named “Python 2.22”

- Changed my IP address. I normally log in with this user from Finland, and for this test I logged in to one of my Azure VMs in the US East data center.

- From there (Quincy) I logged in to the different portals again (portal.office.com, myapps.microsoft.com & aad.portal.azure.com) with the same credentials as before and within a few minutes after the previous sign-in.
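To get a feeling for why two sign-ins like these look suspicious, here is a small sketch that calculates the travel speed implied by them. It is not Microsoft's algorithm, which takes several other factors into account. The coordinates are rough values I picked for Helsinki and Quincy, and the 17 minutes is the gap between the two sign-ins in this test.

```python
from math import asin, cos, radians, sin, sqrt

EARTH_RADIUS_KM = 6371.0


def haversine_km(lat1, lon1, lat2, lon2):
    """Great-circle distance between two points given in decimal degrees."""
    lat1, lon1, lat2, lon2 = map(radians, (lat1, lon1, lat2, lon2))
    a = sin((lat2 - lat1) / 2) ** 2 + cos(lat1) * cos(lat2) * sin((lon2 - lon1) / 2) ** 2
    return 2 * EARTH_RADIUS_KM * asin(sqrt(a))


def implied_speed_kmh(distance_km, minutes_between_sign_ins):
    """Speed the user would need to cover the distance between two sign-ins."""
    return distance_km / (minutes_between_sign_ins / 60)


# Approximate coordinates (assumptions): Helsinki, Finland and Quincy, Washington.
distance = haversine_km(60.17, 24.94, 47.23, -119.85)
speed = implied_speed_kmh(distance, 17)

print(f"Distance: {distance:.0f} km, implied speed: {speed:.0f} km/h")
print("Faster than a commercial flight:", speed > 900)
```

Anything far above the cruising speed of an airliner (roughly 900 km/h) cannot be explained by the same person travelling.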

Alert and Latency

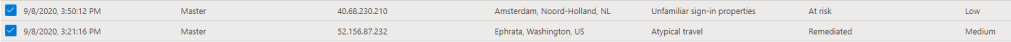

The sign-ins were performed as follows:

- The first sign-in was performed on – Tuesday, September 8, 2020, 3:01PM

- The second one on – September 8, 2020, 3:18PM

Even though the “Atypical travel” detection is offline, it was pretty fast: 3 minutes after the second sign-in from Ephrata, Washington. I have to say that I’m impressed. But as said, enough sign-in data is needed for this one to succeed.

- 9/8/2020, 3:21PM

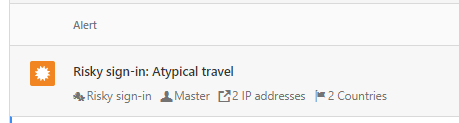

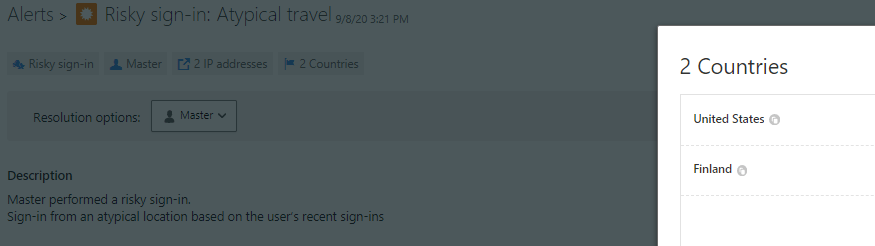

The same alert is also found in Microsoft Cloud App Security because of the integration between the products.

Malware Linked IP-Address (Offline)

According to Microsoft: “This risk detection type indicates sign-ins from IP addresses infected with malware that is known to actively communicate with a bot server. This detection is determined by correlating IP addresses of the user’s device against IP addresses that were in contact with a bot server while the bot server was active”.

For me, this would have been too complicated a scenario to test, and real-life examples are not available for now.

Unfamiliar Sign-in Properties (Real-time)

This risk detection type considers past sign-in history (IP, latitude/longitude, and ASN) to look for anomalous sign-ins. The system stores information about previous locations used by a user and considers these “familiar” locations. This detection type requires (like many others) existing sign-in data from the user. The algorithm needs enough information about the user’s usual sign-in patterns, and the minimum period for collecting that data is five days. Take into account that:

- The learning mode duration is dynamic and depends on how much time it takes the algorithm to gather enough information about the user’s sign-in patterns

- A user can go back into learning mode after a long period of inactivity

- The system also ignores sign-ins from familiar devices and locations that are geographically close to a familiar location


Activity

The imaginary malicious actor used the admin account to log in to my environment. This user has a lot of sign-in data collected over the past years, and for that reason the alert is triggered immediately when the location changes.

Alert and Latency

In Identity Protection we can see the user risks “Atypical travel” and “Unfamiliar sign-in properties”. The first one raised the risk level to medium but was remediated because the IPC policy enforced a password change. The latter is categorized as a low-level risk and doesn’t affect the user authentication process, for now.

Admin confirmed user compromised (Offline)

When an admin, after investigation, marks a user identity as “compromised”, the following actions take place:

- Azure AD moves the user risk to High [Risk state = Confirmed compromised; Risk level = High]

- Adds a new detection, “Admin confirmed user compromised”
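The same action can be done through Microsoft Graph with the `confirmCompromised` action on risky users. Below is a minimal sketch that only builds the HTTP request and does not send it. The user ID and the token are placeholders, the helper name is my own, and the call needs a token with the `IdentityRiskyUser.ReadWrite.All` permission as far as I know.

```python
import json
import urllib.request

CONFIRM_URL = (
    "https://graph.microsoft.com/v1.0/identityProtection/riskyUsers/confirmCompromised"
)


def build_confirm_compromised_request(user_ids, access_token):
    """Build (but do not send) the request that marks users as compromised."""
    body = json.dumps({"userIds": list(user_ids)}).encode("utf-8")
    return urllib.request.Request(
        CONFIRM_URL,
        data=body,
        method="POST",
        headers={
            "Authorization": f"Bearer {access_token}",
            "Content-Type": "application/json",
        },
    )


# Placeholder object ID and token, for illustration only.
request = build_confirm_compromised_request(
    ["00000000-0000-0000-0000-000000000000"], "<access-token>"
)
print(request.get_method(), request.full_url)
print(request.data.decode("utf-8"))
# Sending it would be: urllib.request.urlopen(request)
```

I left the actual call out on purpose, because it changes the risk state of a real user.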

Alert and Latency

No separate alert is created for this activity. The user’s Identity Protection status is updated, and remediation is required on the next login.

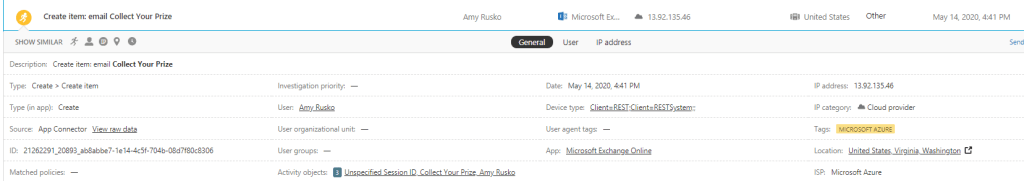

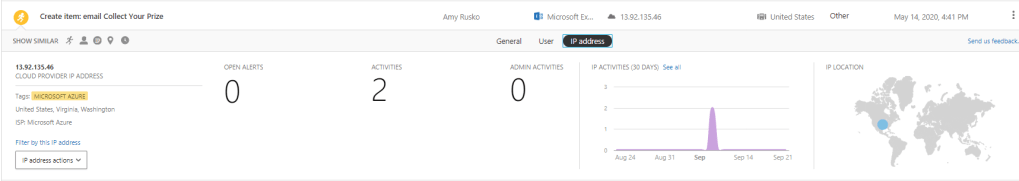

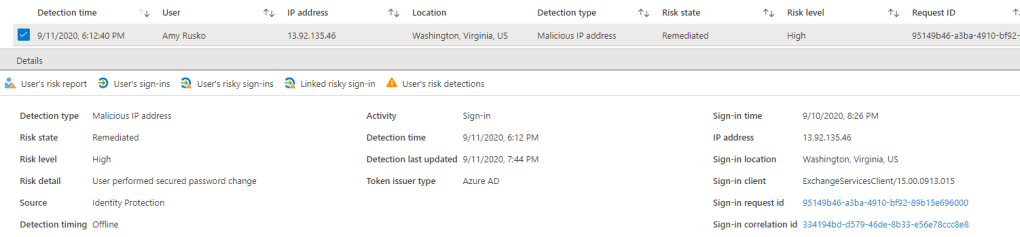

Malicious IP address (Offline)

According to Microsoft: “This detection indicates sign-in from a malicious IP address. An IP address is considered malicious based on high failure rates because of invalid credentials received from the IP address or other IP reputation sources”.

Activity

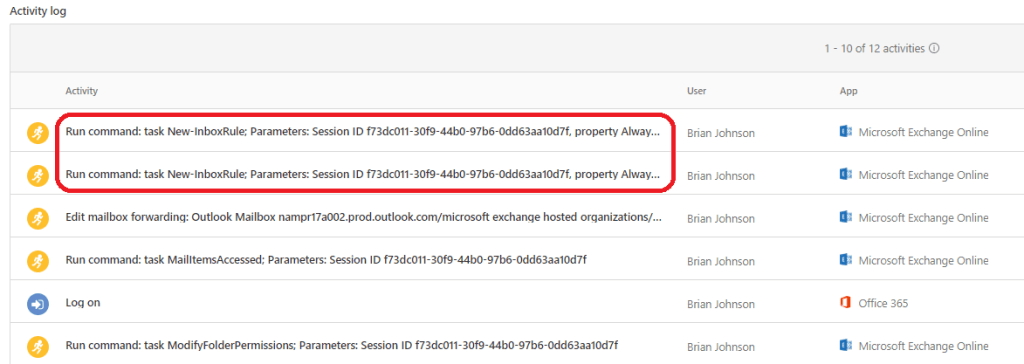

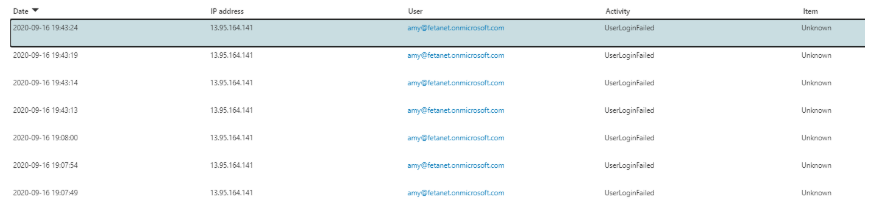

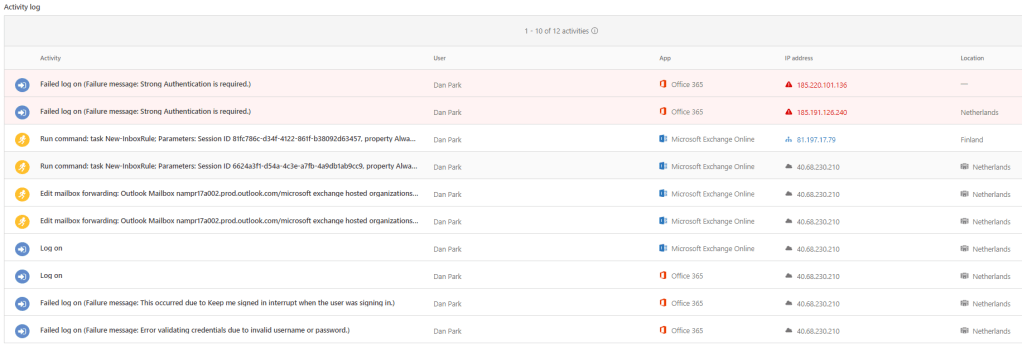

I created targeted attacks with native Microsoft tools and (by accident) got a malicious IP address detection. In this case, the malicious IP belongs to Exchange Online. The event logs are from MCAS.

Alert and Latency

The sign-in from a malicious IP was logged on 9/10/2020, 8:26PM, and the IPC detection on 9/11/2020, 6:12PM. In this case, the detection and alert were mitigated because the user performed a password change.

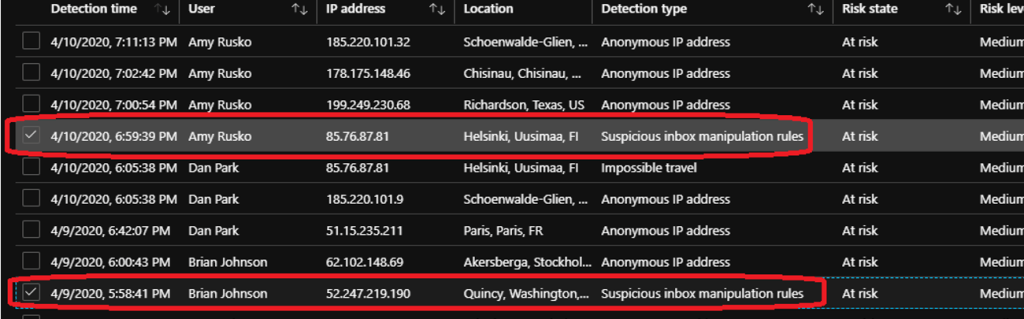

Suspicious inbox manipulation rules (Offline)

According to Microsoft: “This detection is discovered by Microsoft Cloud App Security (MCAS). This detection profiles your environment and triggers alerts when suspicious rules that delete or move messages or folders are set on a user’s inbox. This detection may indicate that the user’s account is compromised, that messages are being intentionally hidden, and that the mailbox is being used to distribute spam or malware in your organization”.

Activity

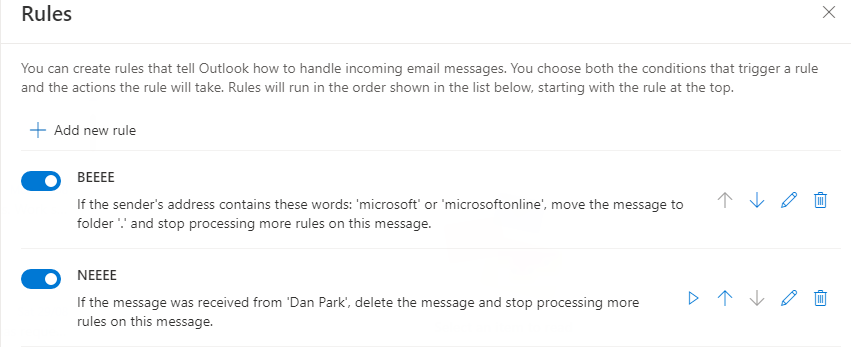

For testing purposes, I created two (2) inbox rules that are treated as suspicious. If you want to know more about the rule logic I recommend reading this blog.

Alert and Latency

When testing mailbox detections (even though this is not a forwarding rule), take into account that “Cloud App Security only alerts you for each forwarding rule that is identified as suspicious, based on the typical behavior for the user”.

This basically means that if you create the same forwarding rule over and over again, it won’t be treated as an offence.

Inbox rules created:

- The activity was made in the user mailbox at 5:36 PM

Detection:

The activity was received from the O365 Management Activity API pretty fast, within about 20 minutes.

- Alert was raised at 5:58PM

The risk in Identity Protection was updated approximately 3 hours after the MCAS detection, and it contains the “suspicious inbox manipulation rules” detection. As you might notice, the pictures are from a time before the summer vacation. For some reason, I was not able to reproduce the detection in my demo tenants. I have a discussion going on with Microsoft about this issue and will update the blog when I have something to share.

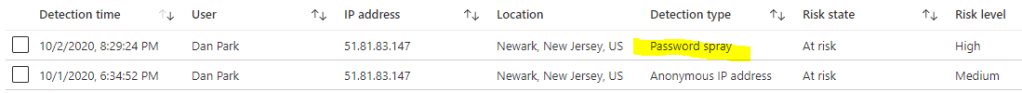

Password spray (Offline)

According to Microsoft: “A password spray attack is where multiple usernames are attacked using common passwords in a unified brute force manner to gain unauthorized access. This risk detection is triggered when a password spray attack has been performed”.

Activity

I used multiple tools to test the detection rules, but Daniel Chronlund’s PowerShell script is quite handy.

I selected 10 test users from my tenant and four (4) different passwords for testing purposes. The activity was made in multiple parts.
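From the defender's side, a password spray leaves a recognizable pattern in the sign-in logs: one source failing against many different users. The sketch below is a naive heuristic on synthetic data that mirrors my test (10 users, 4 passwords). The actual detection logic is Microsoft's own, and the threshold, IP addresses and user names here are made up.

```python
from collections import defaultdict


def spray_candidates(failed_sign_ins, min_users=5):
    """Return source IPs with failed sign-ins against at least min_users users.

    failed_sign_ins is an iterable of (source_ip, username) tuples.
    """
    users_by_ip = defaultdict(set)
    for source_ip, username in failed_sign_ins:
        users_by_ip[source_ip].add(username)
    return {ip: len(users) for ip, users in users_by_ip.items() if len(users) >= min_users}


# Synthetic events: 10 users x 4 passwords from one documentation-range IP.
events = [
    ("203.0.113.10", f"user{n}@example.com") for n in range(10) for _ in range(4)
]
# One ordinary mistyped password from another address.
events.append(("198.51.100.7", "user1@example.com"))

print(len(events), "failed sign-ins")
print(spray_candidates(events))
```

A single user mistyping a password does not stand out, while the sprayed address does because of the number of distinct accounts behind it.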

Alert and Latency

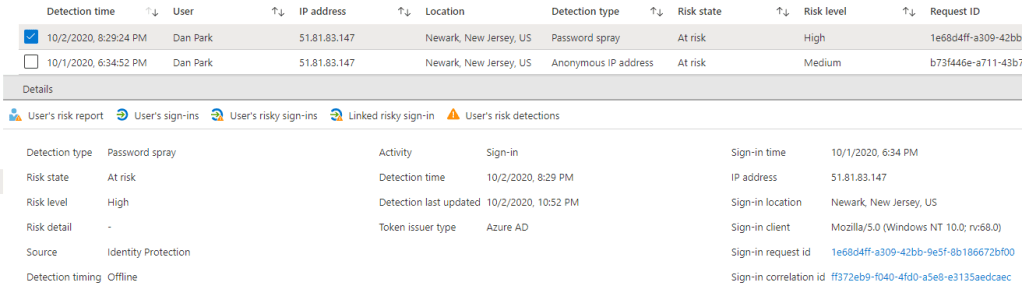

To the best of my knowledge, the “Password Spray” detection has not been rolled out to every Azure AD tenant’s Identity Protection instance. My demo tenant might be in the first ring (located in the US) because on 10/5/2020 it received a detection in Identity Protection. As you can see, the user risk is “High” because of a successful login attempt after a series of failed ones.

- A successful password spray attack (Sign-in risk detection) will change the user risk to “high”

- An unsuccessful attack will not change the user risk

- MFA or other interrupts don’t prevent MS from flagging the user as high risk

Microsoft Cloud App Security also has a detection rule for password spray activity (the built-in “Multiple failed login attempts” rule), but in my MCAS environment the alert was not created.

In MCAS, the reason might be that it didn’t receive any information about the failed logins against the endpoint I used. Why? That is a good question that I need to address later on. The failed logins are found in the Unified Audit Logs, and in that case they should be found in MCAS as well.

I can always trust the cloud-based SIEM, Azure Sentinel, here. The ticket information was also enriched by a playbook that gets IP information from the VirusTotal service.

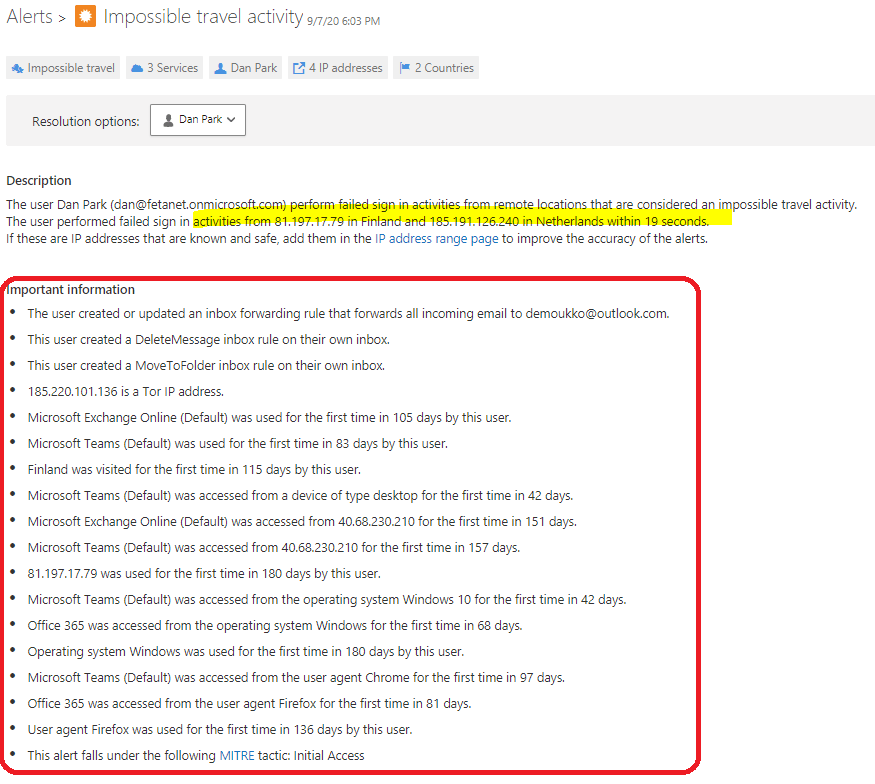

Impossible travel (Offline)

This detection is covered by Microsoft Cloud App Security, but the activity is also seen on the Identity Protection side. According to Microsoft: “This detection identifies two user activities (in a single or multiple sessions) originating from geographically distant locations within a time period shorter than the time it would have taken the user to travel from the first location to the second, indicating that a different user is using the same credentials”.

Activity

To test the impossible travel detection I used multiple mechanisms to “travel” all over the globe.

- Azure VMs in a location where the user hasn’t logged in before

- VPN software and different break-points (Singapore, Japan & West US)

- OpenVPN running in Azure (Japan & East US)

Alert and Latency

As you can see from the pictures below there are many activities related to this alert. MCAS correlates the data and adds important information from the user activities to the alert itself such as:

- Suspicious activities in mailbox

- Tor network address

- O365 collaboration service usage

- When services were last accessed

- MITRE tactics information

Summary

This table summarizes the detections and findings around them in my demo environment.

- Activity – the suspicious activity

- Detection time from Identity Protection

- N/A means that I was not able to get detection based on the rule

| Detection Name | Detection Type | Activity | Detection Time |
| --- | --- | --- | --- |
| Anonymous IP address | Real-time | 5:24PM | No latency, real-time detection |
| Atypical travel | Offline | 1st sign-in 3:01PM, 2nd sign-in 3:18PM | 3:21PM |
| Malware linked IP address | Offline | N/A | N/A |
| Unfamiliar sign-in properties | Real-time | 9:37AM | No latency, real-time detection |
| Admin confirmed user compromised | Offline | N/A | No latency, real-time detection |
| Malicious IP address | Offline | 09/10/2020, 8:26PM | 09/11/2020 6:12PM |
| Suspicious inbox manipulation rules | Offline | 5:36PM | 5:58PM |
| Password spray | Offline | 10/01/2020 6:34PM | 10/02/2020 8:29PM |
| Impossible travel | Offline | 09/07/2020 5:52PM | 09/07/2020 6:03PM in MCAS |
| Leaked credentials | Offline | N/A | N/A |
| Azure AD threat intelligence | Offline | N/A | N/A |
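For the rows that have full timestamps, the latency can be calculated directly from the table. The snippet below is just that arithmetic with the values copied from the table; the variable names are my own.

```python
from datetime import datetime

TIMESTAMP_FORMAT = "%m/%d/%Y %I:%M%p"

# (activity time, detection time) copied from the summary table.
rows = {
    "Malicious IP address": ("09/10/2020 8:26PM", "09/11/2020 6:12PM"),
    "Password spray": ("10/01/2020 6:34PM", "10/02/2020 8:29PM"),
    "Impossible travel": ("09/07/2020 5:52PM", "09/07/2020 6:03PM"),
}


def latency(activity, detection):
    """Time between the suspicious activity and the detection."""
    return datetime.strptime(detection, TIMESTAMP_FORMAT) - datetime.strptime(
        activity, TIMESTAMP_FORMAT
    )


latencies = {name: latency(activity, detection) for name, (activity, detection) in rows.items()}

for name, delta in latencies.items():
    print(f"{name}: {delta}")
```

Keep in mind that these numbers come from a single demo environment and a handful of tests, so treat them as observations rather than guarantees.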

Until next time!